Whether you want to call data the gold or the oil of the 21st century, it is essential for all machine learning applications. That’s why in this chapter we will focus exclusively on how we can create a good information base for our models.

Some basic topics are essential to have a good infrastructure for machine learning:

- Procurement: Data can originate from a variety of systems, both internal and external. Accordingly, physical access to the data can become a problem if the necessary authorizations are missing.

- Quality: Once the data is available, it must be checked whether it meets the requirements of the use case. For example, the individual categories (columns) should not have too many missing values.

- Preparation: If the data quality is not sufficient, there are various methods to prepare the data set in such a way that it can still be used. In addition, the format (e.g. number format or the length of text entries) must be standardized to the form with which the model can work.

- Storage: If the file size exceeds a certain limit or the model is to be constantly retrained with current information, it is not sufficient to keep the data in a file. Instead, a database solution should be used so that the data is available centrally and can be queried more efficiently. Depending on the type and amount of information, there are different database solutions (e.g. MySQL).
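
The quality, preparation and storage steps above can be sketched in a few lines of Python. This is only a minimal illustration under stated assumptions: the records, the column names, the 30 % threshold for missing values and the helper functions `missing_share` and `to_float` are all hypothetical, and the standard-library `sqlite3` module stands in for a full database server such as MySQL.

```python
import sqlite3

# Hypothetical raw records; None marks a missing value.
rows = [
    {"customer": "A", "age": "34", "revenue": "1,200.50"},
    {"customer": "B", "age": None, "revenue": "980"},
    {"customer": "C", "age": "41", "revenue": None},
    {"customer": "D", "age": "29", "revenue": "2,310.00"},
]


def missing_share(records, column):
    """Return the fraction of records in which `column` is missing."""
    missing = sum(1 for record in records if record.get(column) is None)
    return missing / len(records)


def to_float(text):
    """Standardize a number written with thousands separators, e.g. '1,200.50'."""
    return None if text is None else float(text.replace(",", ""))


# Quality: flag columns whose share of missing values exceeds an assumed threshold.
MAX_MISSING = 0.3
shares = {column: missing_share(rows, column) for column in rows[0]}
too_sparse = [column for column, share in shares.items() if share > MAX_MISSING]

# Preparation: bring the numeric columns into one common number format.
clean = [(r["customer"], to_float(r["age"]), to_float(r["revenue"])) for r in rows]

# Storage: keep the prepared records in a database instead of a flat file.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (customer TEXT, age REAL, revenue REAL)")
conn.executemany("INSERT INTO customers VALUES (?, ?, ?)", clean)

# SQL aggregate functions such as AVG skip NULL values.
avg_revenue = conn.execute("SELECT AVG(revenue) FROM customers").fetchone()[0]
```

Which threshold is acceptable depends on the use case, and columns that exceed it are candidates for imputation or removal rather than automatic exclusion.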

These topics are significantly more comprehensive than they appear at first glance. In addition to the various software options offered in this area, we must also be able to statistically evaluate which changes we are allowed to make so as not to limit the validity of the AI model.

Some of Our Articles in the Field of Data

What is the Univariate Analysis?

Master Univariate Analysis: Dive Deep into Data with Visualization and Python. Learn from In-Depth Examples and Hands-On Code.

What is OpenAPI?

Explore OpenAPI: A Comprehensive Guide to Building and Consuming RESTful APIs. Learn How to Design, Document, and Test APIs.

What is Data Governance?

Ensure the quality, availability, and integrity of your organization's data through effective data governance. Learn more here.

What is Data Quality?

Ensuring Data Quality: Importance, Challenges, and Best Practices. Learn how to maintain high-quality data to drive better business decisions.

What is Data Imputation?

Impute missing values with data imputation techniques. Optimize data quality and learn more about the techniques and importance.

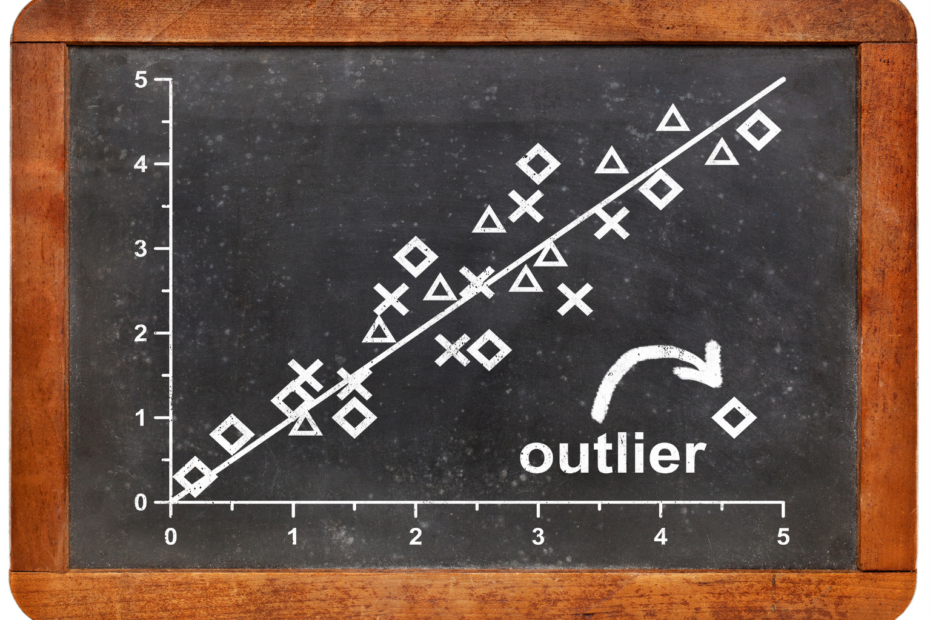

What is Outlier Detection?

Discover hidden anomalies in your data with advanced outlier detection techniques. Improve decision-making and uncover valuable insights.

Data Set Size for Machine Learning

The thesis that machine learning only delivers good results with very large data sets is still persistent. Although it cannot be denied that training models on large data sets is significantly easier and involves less preliminary work, a small data set is not a reason to rule out machine learning. It is possible to build good and precise models even for applications in which only a small amount of data is inherently generated or the information has only been measured and stored for a short time.

A classic example of this is image recognition. If we want to develop a model that determines whether there is a dog in an image or not, we will most likely not be able to avoid manually labeling a large number of images beforehand. Since this work is not only tedious but also very time-consuming, we will probably not have access to a large number of labeled images. Nevertheless, it is not impossible to train a comparatively robust model with the few images we have.

This is made possible by so-called data augmentation methods. A single data point is modified in such a way that it can be used as two, three or four new data points. In this way, we artificially inflate the size of the data set. In our example with the dog images, this means that we take a dog image and generate “new” images from it by using only certain parts of the image or by rotating the image by a few degrees. We still know that each of these new images contains a dog, and the machine learning model can nevertheless draw new conclusions from them.
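
The idea can be sketched in plain Python, assuming an image is represented as a nested list of pixel values. The helpers `crop`, `flip_horizontal` and `augment` are hypothetical names chosen for this illustration. Rotating by a few degrees requires interpolation and is usually left to an image library, so a horizontal flip stands in for it here alongside the cropping described above.

```python
def crop(image, top, left, height, width):
    """Return the sub-image of the given size starting at (top, left)."""
    return [row[left:left + width] for row in image[top:top + height]]


def flip_horizontal(image):
    """Mirror the image from left to right."""
    return [row[::-1] for row in image]


def augment(image, label, crop_size):
    """Create several labeled variants from one labeled image."""
    height, width = len(image), len(image[0])
    # The original and its mirror image keep the same label.
    variants = [(image, label), (flip_horizontal(image), label)]
    # Add one square crop from each of the four corners.
    for top in (0, height - crop_size):
        for left in (0, width - crop_size):
            variants.append((crop(image, top, left, crop_size, crop_size), label))
    return variants


# Hypothetical 4x4 grayscale "image" that has been labeled as a dog.
image = [
    [0, 1, 2, 3],
    [4, 5, 6, 7],
    [8, 9, 10, 11],
    [12, 13, 14, 15],
]

samples = augment(image, "dog", crop_size=3)
```

One labeled image yields six training samples in this sketch. In practice the crops would be resized to the input size of the model, and they must remain large enough that the dog is still visible, otherwise the label no longer holds.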

Conclusion

Data is a defining factor in today’s world. In our private lives, more and more personal information is collected via social media and other online accounts. In business, significantly more data than ever is collected in order to make information-driven decisions and to monitor progress toward goals. Therefore, being able to work with data is an indispensable skill.