Apache Flink is an open-source, distributed framework for processing large-scale data streams and batch data with high performance. It is known for handling massive amounts of data with low latency and high throughput. Flink is built on the dataflow programming model, in which a program is represented as a directed graph of data transformations that can be executed in parallel.
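To make the dataflow idea concrete, here is a minimal word-count sketch in plain Python. It does not use Flink's API. The function names (`flat_map_words`, `partition_by_key`, `count_words`, `run_word_count`) are hypothetical and only mirror the shape of a Flink job: a source feeds a chain of transformations, and records are partitioned by key so that several parallel instances can each handle a disjoint subset of the keys.

```python
from collections import Counter
from typing import Iterable, Iterator


def flat_map_words(lines: Iterable[str]) -> Iterator[str]:
    # Transformation 1: split every incoming line into lower-cased words.
    for line in lines:
        for word in line.lower().split():
            yield word


def partition_by_key(words: Iterable[str], parallelism: int) -> list:
    # Route each word to one of `parallelism` instances so that equal keys
    # always end up in the same instance (similar in spirit to keyBy).
    partitions = [[] for _ in range(parallelism)]
    for word in words:
        partitions[hash(word) % parallelism].append(word)
    return partitions


def count_words(words: Iterable[str]) -> Counter:
    # Transformation 2: each parallel instance counts only its own keys.
    return Counter(words)


def run_word_count(lines: Iterable[str], parallelism: int = 2) -> dict:
    partitions = partition_by_key(flat_map_words(lines), parallelism)
    # The instances are independent and could run on different machines;
    # here they simply run one after another.
    results = {}
    for part in partitions:
        results.update(count_words(part))
    return results
```

For example, `run_word_count(["to be or not to be", "be quick"])` returns the counts `to: 2, be: 3, or: 1, not: 1, quick: 1` for any parallelism, because each key is counted by exactly one instance.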

Flink supports a wide range of data sources and sinks, including Apache Kafka, Apache Hadoop, and Amazon S3, which makes it a versatile tool for data processing and analysis. Flink grew out of the Stratosphere research project at TU Berlin, entered the Apache Incubator in 2014, and graduated to a top-level Apache project shortly afterwards. Since then, it has been widely adopted by organizations for use cases such as real-time analytics, fraud detection, and machine learning.

What does the architecture of Flink look like?

The architecture of Apache Flink comprises multiple layers that work together to provide efficient, fault-tolerant batch and stream processing.

At the core of Apache Flink is a distributed dataflow engine that executes both batch and stream processing jobs. It distributes the processing tasks across a cluster of machines, manages the data streams between them, and provides fault tolerance.

Two further parts build on this engine: the APIs, with which applications are written, and the runtime components, which execute them on the cluster. The API layer provides several programming interfaces: the DataStream API for stream processing (which, in recent versions, can also run batch jobs), the DataSet API for batch processing (deprecated in recent releases), and the relational Table API and SQL. These APIs are designed to be intuitive and easy to use while still providing powerful features for advanced users.

The runtime components execute the data processing applications on the cluster. The most important ones are the JobManager, the TaskManagers, and the ResourceManager. The JobManager schedules jobs, coordinates the distributed execution of their tasks, and handles fault tolerance by coordinating checkpoints and recovery. Each TaskManager is a worker process that executes, in its task slots, the individual tasks assigned to it by the JobManager. The ResourceManager, which runs as part of the JobManager process, manages the cluster's resources in units of task slots and allocates them to jobs as needed.
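The division of labor between these components can be illustrated with a toy model. The classes below are hypothetical stand-ins, not Flink's real interfaces: TaskManagers offer a fixed number of slots, the ResourceManager hands out free slots, and the JobManager requests one slot per parallel slice of the job. This mirrors Flink's default slot sharing, under which one slot can hold one parallel instance of every operator in the pipeline.

```python
from dataclasses import dataclass, field


@dataclass
class TaskManager:
    # Hypothetical stand-in for a worker process with a fixed number of task slots.
    name: str
    slots: int
    assigned: list = field(default_factory=list)

    def free_slots(self) -> int:
        return self.slots - len(self.assigned)


class ResourceManager:
    # Tracks the registered TaskManagers and hands out free slots on request.
    def __init__(self, task_managers):
        self.task_managers = task_managers

    def allocate_slot(self, request_id: str) -> str:
        for tm in self.task_managers:
            if tm.free_slots() > 0:
                tm.assigned.append(request_id)
                return tm.name
        raise RuntimeError(f"no free slot for {request_id}")


class JobManager:
    # Expands the job into parallel slices and asks the ResourceManager for slots.
    def __init__(self, resource_manager: ResourceManager):
        self.resource_manager = resource_manager

    def schedule(self, operators, parallelism: int) -> dict:
        placement = {}
        for i in range(parallelism):
            # With slot sharing, one slot holds the i-th subtask of every operator.
            tm_name = self.resource_manager.allocate_slot(f"slice-{i}")
            for op in operators:
                placement[f"{op}[{i}]"] = tm_name
        return placement
```

With two TaskManagers of two slots each, a three-operator job with parallelism 3 occupies both slots of `tm-1` and one slot of `tm-2`. In this toy model, a parallelism of 5 exceeds the four available slots and raises an error.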

Overall, the architecture of Apache Flink is designed to be highly flexible and scalable, allowing users to process large volumes of data quickly and efficiently while maintaining fault tolerance and reliability.

What are the key features of Apache Flink?

Apache Flink is a distributed stream processing framework with a rich set of features that make it suitable for various use cases. Some of the key features of Apache Flink are:

- Stream and Batch Processing: Flink supports both stream and batch processing, so developers can use one framework for real-time data streams as well as for bounded batch data.

- Fault Tolerance: Apache Flink provides a fault-tolerant runtime that recovers automatically from machine and application failures. Based on periodic checkpoints of the application state, it resumes from the most recent consistent checkpoint, so no data is lost and the internal state is updated exactly once, which keeps downtime short.

- High Throughput and Low Latency: Flink is designed for high-throughput, low-latency processing of data streams and can achieve processing latencies in the millisecond range.

- Event Time Processing: Flink can process data streams based on event time (the time at which an event actually occurred) rather than processing time, which is essential for correctly handling out-of-order and late-arriving data.

- Stateful Stream Processing: Flink allows developers to maintain state across the events of a data stream, which is essential for use cases such as session windows and fraud detection.

- Dynamic Scaling: Jobs can be rescaled to match the workload, for example by restarting them from a savepoint with a different parallelism or, in Reactive Mode, automatically when TaskManagers are added to or removed from the cluster.

- Rich APIs and Libraries: Flink offers a rich set of APIs and libraries, including Java, Scala (deprecated in recent releases), and Python APIs, SQL, FlinkCEP for complex event processing, and Flink ML for machine learning.

- Integrations: It integrates with various external systems such as Apache Kafka, Apache Cassandra, Apache Hadoop, and more, making it easier to build end-to-end data processing pipelines.

These features make Apache Flink a versatile and powerful framework for building complex data processing applications that require low-latency processing, fault tolerance, and dynamic scaling.
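Event time processing is easiest to understand through watermarks. The sketch below, again plain Python rather than Flink's API, assigns events to tumbling event-time windows and derives a watermark with bounded out-of-orderness (the largest timestamp seen so far minus a fixed delay). A window is emitted once the watermark reaches its end, and events that arrive for an already emitted window are treated as late and set aside. As a simplifying assumption, the watermark advances after every event; Flink emits watermarks periodically by default.

```python
from collections import defaultdict


def tumbling_event_time_counts(events, window_size, max_out_of_orderness):
    """Count events per key in tumbling event-time windows.

    events: (event_timestamp, key) pairs in arrival order, possibly out of order.
    Returns ({(window_start, key): count}, [late events that were dropped]).
    """
    open_windows = defaultdict(int)  # windows that have not been emitted yet
    fired = {}
    late = []
    watermark = float("-inf")        # no event older than this is expected anymore
    for ts, key in events:
        window_start = ts - ts % window_size
        if window_start + window_size <= watermark:
            # The window for this event was already emitted: the event is late.
            late.append((ts, key))
            continue
        open_windows[(window_start, key)] += 1
        # Bounded out-of-orderness: the watermark trails the largest timestamp seen.
        watermark = max(watermark, ts - max_out_of_orderness)
        # Emit every window whose end the watermark has reached.
        for wk in sorted(k for k in open_windows if k[0] + window_size <= watermark):
            fired[wk] = open_windows.pop(wk)
    # End of the (bounded) input: flush all remaining windows.
    for wk in sorted(open_windows):
        fired[wk] = open_windows[wk]
    return fired, late
```

With a window size of 10 and a delay of 5, the event stream `(1, a), (4, a), (12, a), (7, a), (16, a), (3, a), (25, a)` yields the counts 3, 2, and 1 for the windows starting at 0, 10, and 20. The out-of-order event at 7 is still counted, while the event at 3 arrives after its window has fired and is reported as late.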

How is Flink integrated with other data processing tools?

Apache Flink is designed to work seamlessly with a variety of other data processing tools and technologies. Here are some of the common ways that Apache Flink can be integrated with other tools:

- Apache Kafka: Apache Kafka is a popular distributed streaming platform that is commonly used with Apache Flink. Flink can read data from Kafka topics and can also write data back to Kafka. This makes it easy to build end-to-end streaming data pipelines using Kafka and Flink.

- Apache Hadoop: Apache Flink also integrates with the Apache Hadoop ecosystem. It can read data from and write data to HDFS (Hadoop Distributed File System), run on Hadoop YARN clusters, and, through its Hadoop compatibility module, reuse existing Hadoop input/output formats and MapReduce functions.

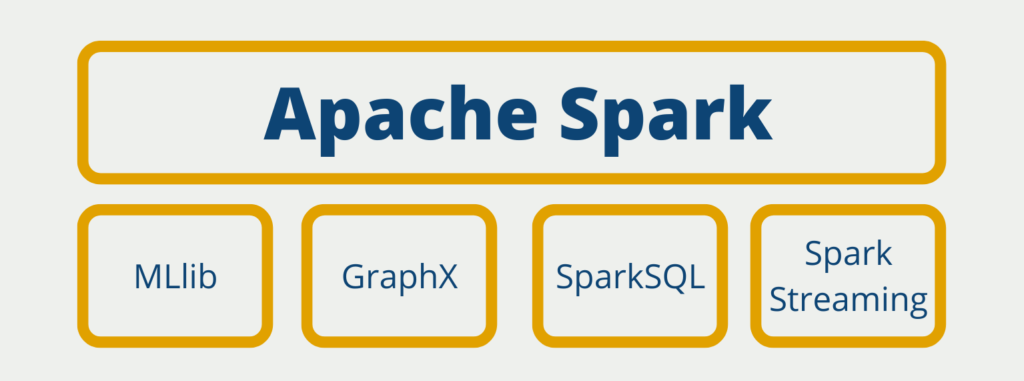

- Apache Spark: Apache Spark is another popular big data processing framework that can be combined with Apache Flink. Spark and Flink typically exchange data through shared storage such as HDFS and common file formats such as Parquet, so each engine can process the results of the other.

- Apache NiFi: Apache NiFi is a data integration tool that provides a web-based interface for designing and managing data flows. Apache Flink can read data from NiFi and process it in real-time.

- Apache Beam: Apache Beam is a unified programming model for batch and streaming data processing. Beam pipelines can be executed on Apache Flink through Beam’s Flink Runner, allowing users to take advantage of Beam’s portability while using Flink as the execution engine.

- SQL: Apache Flink also provides SQL support that allows users to analyze data in real time with SQL queries. Besides standard SQL queries, it supports pattern recognition over event streams, a form of complex event processing (CEP), via the MATCH_RECOGNIZE clause.

These integrations allow Apache Flink to be used in a wide variety of data processing use cases, including real-time data processing, machine learning, and ETL (extract, transform, load) pipelines.
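A SQL query over a stream never finishes; instead, its result is continuously updated as new rows arrive. The sketch below emulates, in plain Python, the changelog that a continuous query such as `SELECT user_name, COUNT(*) FROM clicks GROUP BY user_name` would produce. The `clicks` table and the `user_name` column are hypothetical; the row kinds `+I` (insert), `-U` (retract the old value), and `+U` (emit the new value) follow the notation Flink uses for changelog streams.

```python
def continuous_group_count(clicks):
    """Emulate the changelog of a continuous GROUP BY ... COUNT(*) query."""
    counts = {}      # current result table: user_name -> count
    changelog = []   # (row kind, user_name, count) in emission order
    for user_name in clicks:
        old = counts.get(user_name)
        if old is None:
            # First row for this key: insert a new result row.
            changelog.append(("+I", user_name, 1))
            counts[user_name] = 1
        else:
            # Existing key: retract the old result row, then emit the update.
            changelog.append(("-U", user_name, old))
            changelog.append(("+U", user_name, old + 1))
            counts[user_name] = old + 1
    return changelog
```

Feeding the clicks `alice, bob, alice` produces `+I alice 1`, `+I bob 1`, `-U alice 1`, `+U alice 2`: the second click by alice retracts the previous result row before the updated count is emitted.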

How performant is Apache Flink?

Apache Flink is designed to provide efficient and high-performance data processing capabilities for big data applications. Some of the features that enable Flink to achieve high performance are:

- Streaming-first architecture: Flink has a streaming-first architecture, which means it is designed to handle data streams efficiently. It uses a dataflow engine that processes data in a continuous and incremental manner.

- Memory management: Flink manages a large part of its memory itself (managed memory) and stores data in a compact, serialized binary format, which reduces garbage collection overhead. Operators such as sorts and joins can spill data to disk when it does not fit into memory.

- Operator chaining: Flink chains consecutive operators together into a single task that runs in one thread. This avoids serializing and deserializing records between these operators and reduces thread handovers and buffering.

- Stateful stream processing: Flink keeps state local to its operators, either in memory or in an embedded RocksDB store, so stateful operations do not need round trips to an external database. This enables Flink to perform complex processing operations on streaming data efficiently.

- Fault tolerance: Flink provides a fault-tolerant runtime that can handle node failures and network issues. It uses a checkpointing mechanism that periodically saves the state of the running application to a durable storage system.

- Native batch processing: Flink has a native batch processing mode that can handle batch jobs as well as streaming jobs. This provides a unified processing framework for both types of workloads.

- Dynamic scaling: Flink provides dynamic scaling of resources to handle varying workloads. It can scale up or down depending on the processing requirements of the application.

Overall, Flink’s performance is generally considered competitive with other popular big data processing frameworks such as Apache Spark and Apache Storm, although actual results depend heavily on the workload, configuration, and hardware. Its streaming-first architecture and optimized memory management make it a strong choice for high-performance data processing applications.
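The checkpointing mechanism from the list above can be sketched for a single stateful operator. In this hypothetical example, a running sum over a replayable source (comparable to reading a Kafka topic from a stored offset) is snapshotted together with the source position every few records. When a failure is simulated, the operator rolls back to the last snapshot and replays the records from the saved position, so every record contributes to the final sum exactly once. Real Flink coordinates such snapshots across all operators of a job with checkpoint barriers and writes them to durable storage; this toy keeps the snapshot in a local variable.

```python
def run_with_checkpoints(source, checkpoint_interval, fail_at=None):
    """Sum a replayable source with periodic checkpoints and one optional failure.

    source: list of numbers that can be re-read from any offset.
    fail_at: offset at which a single failure is simulated (None for no failure).
    """
    checkpoint = (0, 0)          # last snapshot: (next offset to read, running sum)
    offset, total = checkpoint   # live operator state
    has_failed = False
    while offset < len(source):
        if offset == fail_at and not has_failed:
            has_failed = True
            # Recovery: discard the live state and restore the last snapshot.
            offset, total = checkpoint
            continue
        total += source[offset]
        offset += 1
        if offset % checkpoint_interval == 0:
            # Snapshot state and source position together so they stay consistent.
            checkpoint = (offset, total)
    return total
```

For `source = list(range(1, 11))`, both `run_with_checkpoints(source, 3)` and `run_with_checkpoints(source, 3, fail_at=7)` return 55: after the failure, the operator restarts from the snapshot taken at offset 6 and replays the record at offset 6, whose earlier effect was discarded together with the live state.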

What are the applications of Apache Flink?

Apache Flink is a powerful data processing framework that has been used in various use cases across industries. Here are some examples of how Apache Flink has been used:

- Stream Processing: Flink has been used for real-time stream processing in a wide range of industries, including finance, telecommunications, and e-commerce. For example, Flink can be used to detect fraudulent transactions in real time or to provide real-time recommendations to customers based on their browsing behavior.

- Batch Processing: Flink is also well-suited for batch processing, especially when dealing with large volumes of data. Flink can be used to process data in batches, such as for data warehousing and ETL (Extract, Transform, Load) jobs.

- Machine Learning: Flink can also be used for machine learning, for example with the Flink ML library, which allows users to build machine learning models on large datasets in a distributed environment.

- IoT: Flink has also been used in Internet of Things (IoT) applications, where it can be used to process data streams from sensors and other devices in real-time.

- Data Analytics: Flink can be used for various data analytics use cases, such as real-time data visualization, data aggregation, and anomaly detection.

- Data Integration: Flink can also be used for data integration, such as data migration and data synchronization, where data needs to be transferred from one system to another.

Overall, Apache Flink is a versatile framework that can be used in a wide range of use cases where fast, scalable, and fault-tolerant data processing is required.
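The fraud-detection use case relies on keyed state: Flink partitions the stream by a key, such as an account ID, and keeps separate state for each key. The plain-Python sketch below imitates that pattern with a dictionary keyed by account. The rule (a transaction under 1.00 followed within 60 seconds by one over 500.00 on the same account) and its thresholds are hypothetical, chosen only for illustration; in Flink one would typically also register a timer to clear the stored flag once the interval has passed.

```python
SMALL_AMOUNT = 1.00      # hypothetical threshold for a "small" test transaction
LARGE_AMOUNT = 500.00    # hypothetical threshold for a "large" transaction
MAX_GAP_SECONDS = 60     # hypothetical time limit between the two transactions


def detect_fraud(transactions):
    """Flag a large transaction that follows a small one on the same account.

    transactions: (timestamp_seconds, account_id, amount) tuples in time order.
    Returns the transactions that triggered an alert.
    """
    # Per-key state: account_id -> timestamp of its most recent small transaction.
    # In Flink this would be keyed state on a stream partitioned by account_id.
    last_small = {}
    alerts = []
    for ts, account, amount in transactions:
        # Any new transaction on the account consumes the pending flag.
        small_ts = last_small.pop(account, None)
        if (small_ts is not None and amount > LARGE_AMOUNT
                and ts - small_ts <= MAX_GAP_SECONDS):
            alerts.append((ts, account, amount))
        if amount < SMALL_AMOUNT:
            last_small[account] = ts
    return alerts
```

Given the transactions `(0, acc-1, 0.50)`, `(20, acc-1, 900.00)`, `(100, acc-3, 0.20)`, and `(200, acc-3, 800.00)`, only the 900.00 transaction is flagged; on `acc-3`, the large amount arrives more than 60 seconds after the small one.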

This is what you should take with you

- Apache Flink enables the processing of massive data streams in real-time, providing low-latency and high-throughput capabilities.

- Flink’s fault-tolerant architecture ensures reliable and consistent processing even in the face of failures.

- The flexible programming model of Flink allows developers to write complex data processing pipelines with ease.

- Flink’s support for event time processing and windowing enables handling of out-of-order data and time-based aggregations.

- Flink’s extensive ecosystem integration with tools like Apache Kafka and Apache Hadoop makes it a versatile choice for various data processing scenarios.

- The rich set of connectors, libraries, and APIs provided by Flink simplifies integration with other systems and extends its functionality.

- Flink’s scalability allows it to handle growing data volumes and processing requirements.

- Flink’s community-driven development and active user base ensure continuous improvement and support.

What is the Univariate Analysis?

Master Univariate Analysis: Dive Deep into Data with Visualization, and Python - Learn from In-Depth Examples and Hands-On Code.

What is OpenAPI?

Explore OpenAPI: A Comprehensive Guide to Building and Consuming RESTful APIs. Learn How to Design, Document, and Test APIs.

What is Data Governance?

Ensure the quality, availability, and integrity of your organization's data through effective data governance. Learn more here.

What is Data Quality?

Ensuring Data Quality: Importance, Challenges, and Best Practices. Learn how to maintain high-quality data to drive better business decisions.

What is Data Imputation?

Impute missing values with data imputation techniques. Optimize data quality and learn more about the techniques and importance.

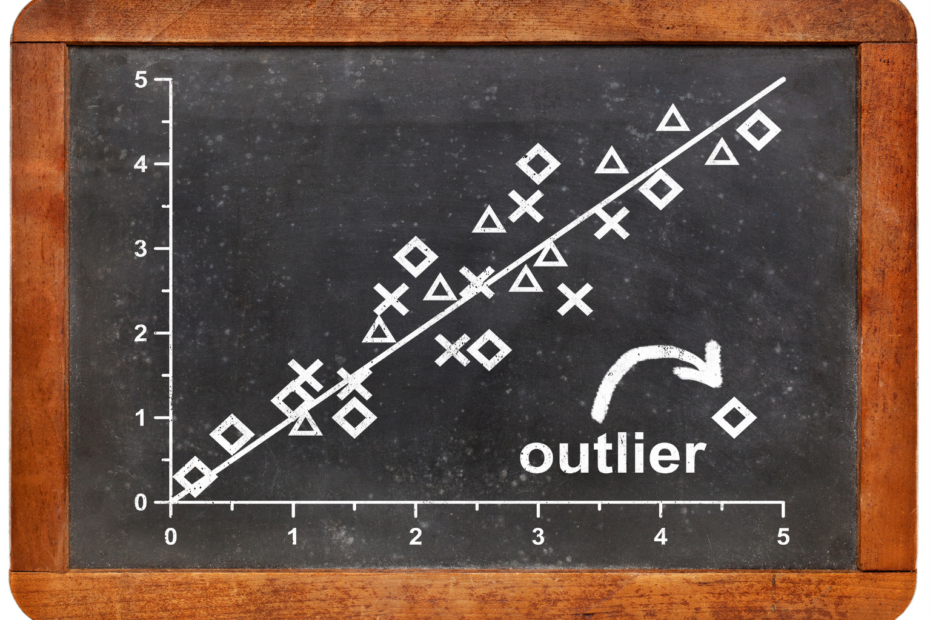

What is Outlier Detection?

Discover hidden anomalies in your data with advanced outlier detection techniques. Improve decision-making and uncover valuable insights.

Other Articles on the Topic of Apache Flink

You can find the official website of Apache Flink here.

Niklas Lang

I have been working as a machine learning engineer and software developer since 2020 and am passionate about the world of data, algorithms and software development. In addition to my work in the field, I teach at several German universities, including the IU International University of Applied Sciences and the Baden-Württemberg Cooperative State University, in the fields of data science, mathematics and business analytics.

My goal is to present complex topics such as statistics and machine learning in a way that makes them not only understandable, but also exciting and tangible. I combine practical experience from industry with sound theoretical foundations to prepare my students in the best possible way for the challenges of the data world.