Deep Learning is a method of information processing that analyzes large amounts of data using neural networks. This approach is loosely modeled on the biological processes in the human brain, with the difference that processing data sets of this size would be nearly impossible for our brain.

How does Deep Learning work?

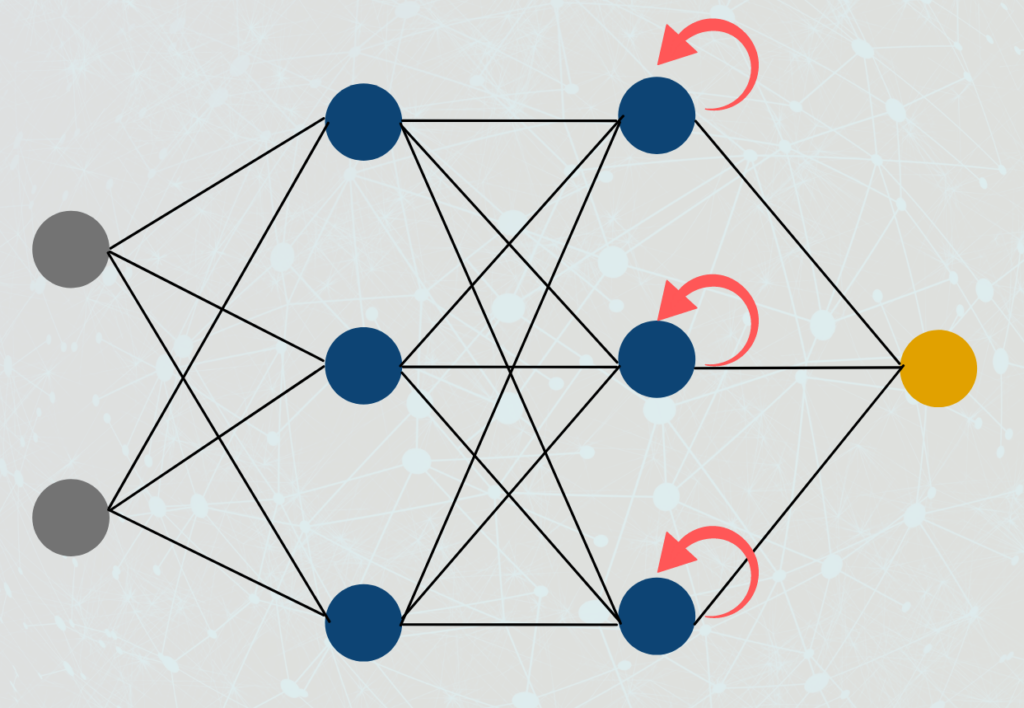

Deep Learning includes algorithms that are programmed to learn largely without human intervention. The technical basis of these programs is neural networks. These consist of many layers of neurons, loosely comparable to our brain. All the information that is to be processed arrives in the input layer. In our biological example, this would be the sensory impressions from eyes, fingers, etc. At the end of the network, the output layer produces one or more responses, depending on the inputs. For example, if we see a lion in the immediate vicinity, our reaction is to quickly get to safety.

In order for this appropriate response to occur, we must process the inputs correctly. This happens in the layers between the input and output layers, the so-called hidden layers. Based on past experience, stronger or weaker connections form between neurons from different layers. The more intermediate layers a network has, the “deeper” it is. This is where the term “deep” learning comes from.
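The path from input layer through hidden layers to output layer can be sketched in a few lines of plain Python. This is only an illustration: the layer sizes, the hand-picked weights, and the sigmoid activation are assumptions made for the example, not something the article prescribes.

```python
import math

def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def layer_forward(inputs, weights, biases):
    """Compute one layer: every neuron takes a weighted sum of all inputs."""
    return [
        sigmoid(sum(w * x for w, x in zip(neuron_weights, inputs)) + b)
        for neuron_weights, b in zip(weights, biases)
    ]

def network_forward(inputs, layers):
    """Pass the inputs through each layer in turn and return the final output."""
    activations = inputs
    for weights, biases in layers:
        activations = layer_forward(activations, weights, biases)
    return activations

# Hypothetical weights: 2 inputs -> 3 hidden neurons -> 1 output neuron.
layers = [
    ([[0.5, -0.2], [0.8, 0.1], [-0.3, 0.9]], [0.0, 0.1, -0.1]),  # hidden layer
    ([[1.0, -1.0, 0.5]], [0.2]),                                  # output layer
]

output = network_forward([1.0, 0.0], layers)
print(output)
```

Adding more `(weights, biases)` pairs to `layers` makes the network deeper in exactly the sense described above. Larger weights correspond to stronger connections between neurons.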

This example can be transferred almost one-to-one to the technical algorithm. We define a neural network with a certain number of layers and neurons. In most cases, more neurons allow the model to learn more complex relationships. So the more complex the use case, the larger the neural network tends to be. With the help of training data, the model then learns how strongly to link the neurons with each other, so that the correct relationship between model input and output is created. From the outside, we only specify what the correct prediction should look like. The model learns to make the right connections within the network on its own.
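To make "learning the connections" concrete, here is a minimal sketch that uses the smallest possible network, a single neuron, trained with gradient descent to reproduce the logical AND function. The task, the learning rate, and the number of epochs are assumptions chosen for illustration. Real networks have hidden layers and are trained with backpropagation, which applies the same idea layer by layer.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict(weights, bias, inputs):
    """Weighted sum of the inputs, squashed into (0, 1)."""
    return sigmoid(sum(w * x for w, x in zip(weights, inputs)) + bias)

def train(samples, epochs=5000, learning_rate=0.5):
    """Adjust weights and bias so that predictions move toward the targets."""
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        grad_w = [0.0, 0.0]
        grad_b = 0.0
        for inputs, target in samples:
            # Gradient of the cross-entropy loss w.r.t. the weighted sum.
            error = predict(weights, bias, inputs) - target
            for i, x in enumerate(inputs):
                grad_w[i] += error * x
            grad_b += error
        # Step against the gradient: strengthen or weaken each connection.
        weights = [w - learning_rate * g for w, g in zip(weights, grad_w)]
        bias -= learning_rate * grad_b
    return weights, bias

# From the outside we only provide inputs and the correct outputs.
and_samples = [([0, 0], 0), ([0, 1], 0), ([1, 0], 0), ([1, 1], 1)]
weights, bias = train(and_samples)

for inputs, target in and_samples:
    print(inputs, target, round(predict(weights, bias, inputs), 3))
```

Nothing in the code states the rule for AND. The weights are found purely from the examples and their expected outputs.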

What are the different types of neural networks?

There are different types of neural networks used for different purposes in Deep Learning. Some of the most commonly used neural network types are:

- Feedforward Neural Networks: Feedforward neural networks are the simplest type of neural networks that have an input layer, one or more hidden layers, and an output layer. They are used for regression and classification tasks where the input and output data are well-defined.

- Convolutional Neural Networks (CNNs): CNNs are used for image and video recognition tasks. They have convolutional layers that can extract features from input images or videos, and pooling layers that can reduce the size of feature maps.

- Recurrent Neural Networks (RNNs): RNNs are used for processing sequential data such as text or speech. They have a feedback loop that allows them to process data that is temporally related.

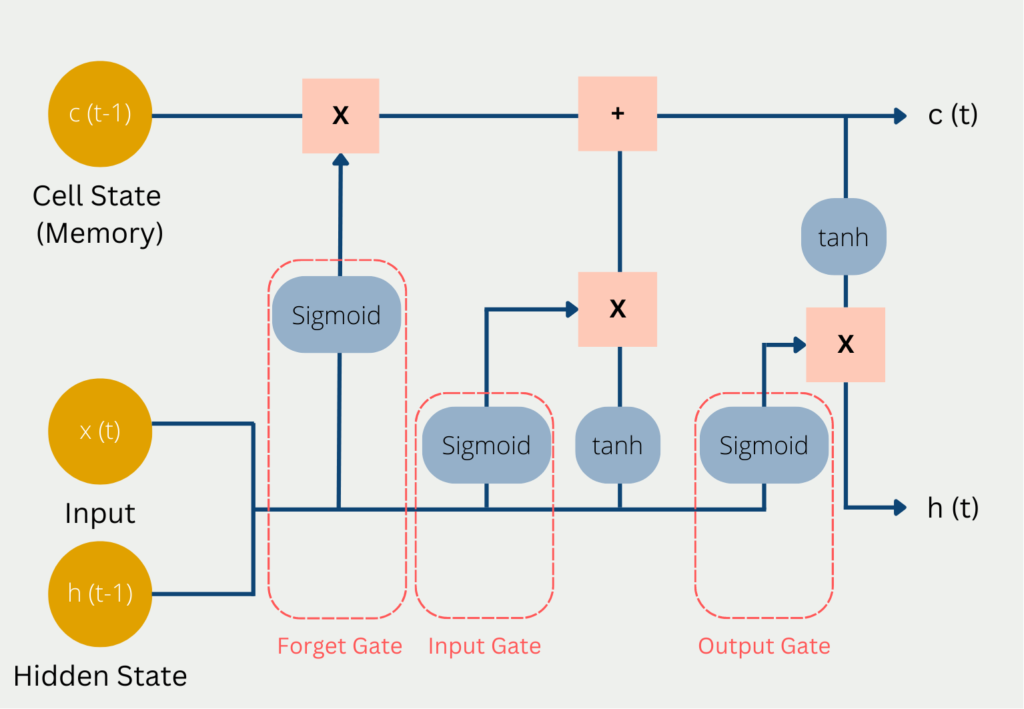

- Long Short-Term Memory (LSTM): LSTMs are a type of RNN specifically designed to mitigate the vanishing gradient problem, which makes it hard for plain RNNs to learn long-range dependencies. They are used for speech recognition, natural language processing, and image captioning.

- Autoencoder neural networks: Autoencoder neural networks are used for unsupervised learning tasks such as dimensionality reduction, feature extraction, and anomaly detection. They have an encoder that can map the input data to a low-dimensional space and a decoder that can reconstruct the original input data from the low-dimensional representation.

- Generative Adversarial Networks (GANs): GANs are used to generate new data that is similar to the training data. They consist of two neural networks – a generator, which can generate new data, and a discriminator, which can discriminate between the generated data and the real data.

Each type of neural network has its own strengths and weaknesses, and choosing the right type depends on the task at hand.
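As an illustration of the two CNN building blocks mentioned above, the following sketch applies a convolution and a max-pooling step to a toy image in plain Python. The 5x5 image and the 2x2 edge-detecting kernel are made up for the example. In a real CNN the kernel values are learned during training rather than set by hand.

```python
def convolve2d(image, kernel):
    """Slide the kernel over the image (no padding, stride 1).

    Like most deep learning libraries, this computes a cross-correlation,
    i.e. the kernel is not flipped.
    """
    k_rows, k_cols = len(kernel), len(kernel[0])
    out_rows = len(image) - k_rows + 1
    out_cols = len(image[0]) - k_cols + 1
    return [
        [
            sum(
                image[r + i][c + j] * kernel[i][j]
                for i in range(k_rows)
                for j in range(k_cols)
            )
            for c in range(out_cols)
        ]
        for r in range(out_rows)
    ]

def max_pool2d(feature_map, size=2):
    """Keep the largest value in each non-overlapping size x size window."""
    return [
        [
            max(feature_map[r + i][c + j] for i in range(size) for j in range(size))
            for c in range(0, len(feature_map[0]) - size + 1, size)
        ]
        for r in range(0, len(feature_map) - size + 1, size)
    ]

# Toy image: dark on the left (0), bright on the right (1).
image = [[0, 0, 0, 1, 1] for _ in range(5)]

# Hypothetical kernel that responds to a dark-to-bright vertical edge.
kernel = [[-1, 1], [-1, 1]]

feature_map = convolve2d(image, kernel)  # 4x4, non-zero only at the edge
pooled = max_pool2d(feature_map)         # 2x2, edge information is kept

print(feature_map)
print(pooled)
```

The feature map is non-zero only where the brightness changes, and pooling halves its width and height while keeping that signal.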

What are practical applications for Deep Learning?

Today, we already encounter neural networks in everyday life, often without noticing. They attempt to learn correlations from past data that can be applied in future situations.

- Dynamic Pricing: This is about setting different prices for the same product depending on the customer, country, or other circumstances. A few years ago, this was mainly limited to airlines, which adjusted flight prices as the departure date approached. Today, this strategy is conceivable in many areas, for example in e-commerce, where customers are offered particularly favorable bundles to lure them back into the store.

- Product Recommendation: This is another use case that is primarily found in e-commerce. It aims to suggest a suitable product to the customer based on, for example, their purchase history, search behavior, or other customer characteristics. In addition, such algorithms are also used by Netflix or Amazon Prime to suggest a suitable series or movie.

- Fraud Detection: This is the automated detection of conspicuous behavior of all kinds, which usually indicates misuse of the system. The best-known use case is bank accounts with conspicuous debits or credit card transactions, which could indicate that the credit card has fallen into the wrong hands.

Deep Learning vs. Machine Learning

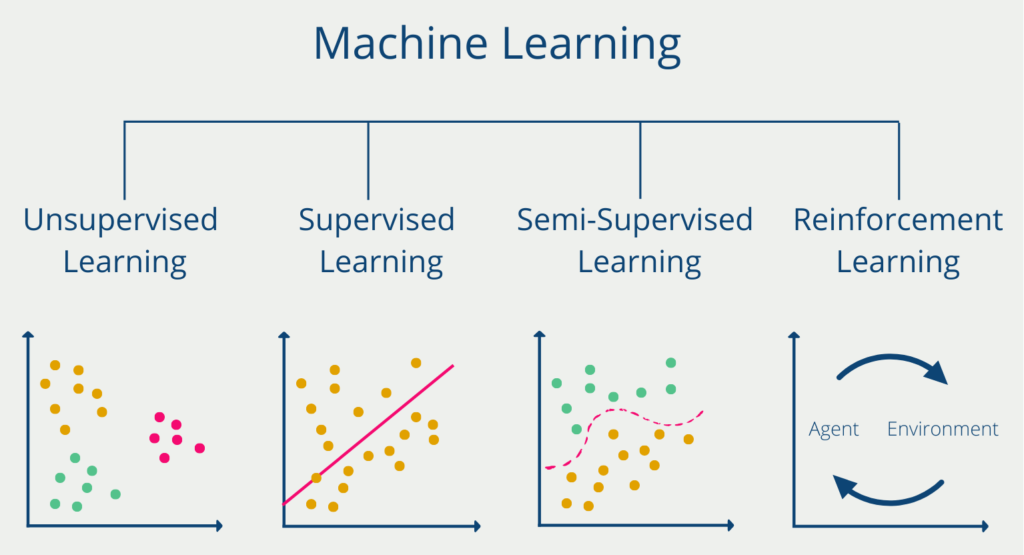

Deep Learning is a subfield of Machine Learning. It differs from classical Machine Learning mainly in how much human involvement the learning process needs. Deep Learning algorithms can work directly on unstructured data, such as images, videos, or audio files. Other Machine Learning models, on the other hand, usually need the help of humans to process this data, for example by extracting features by hand that describe what makes up a car in an image. Deep Learning algorithms can automatically convert unstructured data into numerical values, incorporate these into their predictions, and recognize structures without this manual step.
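What "converting unstructured data into numerical values" can look like is sketched below for a tiny grayscale image. The function name `image_to_vector` and the 2x3 example image are hypothetical. The point is only that the raw pixels themselves become the model input, without hand-crafted features in between.

```python
def image_to_vector(pixels, max_value=255):
    """Flatten a 2D grid of grayscale pixels into a list of floats in [0, 1]."""
    return [value / max_value for row in pixels for value in row]

# Hypothetical 2x3 grayscale image, one integer (0-255) per pixel.
tiny_image = [
    [0, 128, 255],
    [64, 192, 32],
]

vector = image_to_vector(tiny_image)
print(len(vector), vector)
```

A vector like this could be fed straight into the input layer of a neural network, with one input neuron per pixel.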

In addition, Deep Learning algorithms are able to process significantly larger amounts of data and thus also tackle more complex tasks than conventional Machine Learning models. However, this comes at the expense of a significantly longer training time for Deep Learning models. At the same time, these models are also very difficult to interpret. That is, we often cannot understand how a neural network arrived at a prediction, even when it is a good one.

What are the future topics in Deep Learning?

Deep Learning has become an indispensable tool in modern artificial intelligence and has revolutionized various fields such as image recognition, speech recognition, and natural language processing. However, there are still challenges that need to be overcome in this field. Some of the main ones are:

- Data: Deep Learning algorithms require large amounts of data to learn patterns and make accurate predictions. However, obtaining and labeling data can be costly and time-consuming.

- Interpretability: Models can be difficult to interpret, making it difficult to determine the cause of errors and biases.

- Overfitting: Deep Learning models can overfit the training data, meaning they fit its details and noise too closely and then perform poorly on new data.

- Training time: The models require a lot of computing power and time to be trained, making it difficult for smaller organizations and individuals to develop them.

- Generalization: The models trained on certain data may not be able to generalize to other data sets, resulting in lower performance.

- Attacks: Deep Learning models may be vulnerable to outside attacks in which malicious actors manipulate the input data to cause the model to make incorrect predictions.
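The last point, manipulated inputs, can be illustrated with a deliberately simple model. The sketch below uses a single sigmoid neuron with made-up weights as a stand-in for a trained network and nudges every input by a small amount `epsilon` in the direction that lowers the score. This is the idea behind gradient-sign attacks. All numbers are assumptions for illustration.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict(weights, bias, inputs):
    """Probability that the input belongs to the positive class."""
    return sigmoid(sum(w * x for w, x in zip(weights, inputs)) + bias)

def sign(value):
    return (value > 0) - (value < 0)

def adversarial_example(weights, inputs, epsilon):
    """Shift every input by at most epsilon so that the score drops.

    For this model the gradient of the score with respect to an input
    has the same sign as the corresponding weight.
    """
    return [x - epsilon * sign(w) for x, w in zip(inputs, weights)]

# Hypothetical "trained" model and an input it classifies as positive.
weights, bias = [2.0, -3.0, 1.0], -0.5
original = [0.6, 0.2, 0.3]

perturbed = adversarial_example(weights, original, epsilon=0.1)

print(round(predict(weights, bias, original), 3))   # above 0.5
print(round(predict(weights, bias, perturbed), 3))  # below 0.5
```

No input value changes by more than 0.1, yet the predicted class flips. Deep networks can be sensitive in a similar way, only in many more dimensions.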

Despite these challenges, the future of Deep Learning looks promising. Researchers are constantly developing new techniques to overcome these challenges and improve the accuracy and efficiency of Deep Learning models. Some of the areas where Deep Learning is expected to become more important in the future include:

- Personalized healthcare: Deep Learning models can analyze patient data to provide personalized treatments and diagnoses.

- Autonomous vehicles: Deep Learning models can help autonomous vehicles detect and react to objects and obstacles in real time.

- Advanced robotics: Deep Learning models can help robots learn and adapt to new environments to improve their flexibility and effectiveness.

- Climate modeling: These models can help predict the impacts of climate change and identify mitigation strategies.

- Finance: Deep Learning models can analyze market trends and make predictions to improve investment decisions.

As Deep Learning continues to develop and mature, it is expected to become even more prevalent in our daily lives, changing the way we live, work

This is what you should take with you

- Deep Learning is a subfield of Machine Learning and describes a method of information processing.

- Neural networks in particular are used to learn correlations from large data sets, which can then be applied in future situations.

- Deep Learning is already used today, for example, in product recommendations in e-commerce or in fraud detection in the banking sector.

- Deep Learning differs from classical Machine Learning primarily in that it can work directly on unstructured data such as images, videos, or audio recordings.

Other Articles on the Topic of Deep Learning

- IBM has an exciting article describing other Deep Learning applications.

Niklas Lang

I have been working as a machine learning engineer and software developer since 2020 and am passionate about the world of data, algorithms and software development. In addition to my work in the field, I teach at several German universities, including the IU International University of Applied Sciences and the Baden-Württemberg Cooperative State University, in the fields of data science, mathematics and business analytics.

My goal is to present complex topics such as statistics and machine learning in a way that makes them not only understandable, but also exciting and tangible. I combine practical experience from industry with sound theoretical foundations to prepare my students in the best possible way for the challenges of the data world.